The moment that changed everything happened during a routine process review. We asked our best engineer — the person who had architected our core platform, mentored half the team, and consistently delivered the most impactful work — to take our own coding interview as a control test.

She failed. Not marginally. She scored in the bottom 30% of candidates.

The woman who had single-handedly rebuilt our payment processing pipeline, reducing transaction failures by 94%, couldn't solve a medium-difficulty dynamic programming problem in 45 minutes. She hadn't practiced LeetCode since her last job search three years ago. The algorithms she used daily — distributed consensus, eventual consistency, cache invalidation strategies — weren't on the test.

If our own interview process would reject our best engineer, the process was broken. We killed it entirely.

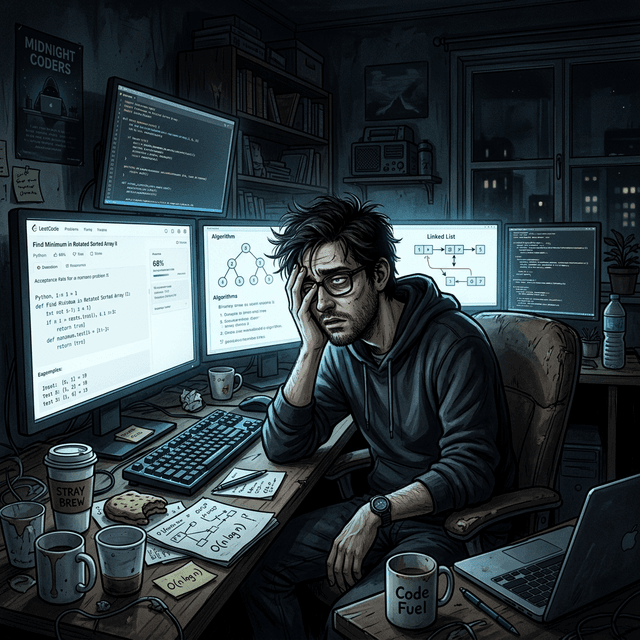

The LeetCode Industrial Complex

LeetCode-style interviews have created an entire secondary industry. Candidates spend hundreds of hours and thousands of dollars preparing for algorithmic coding interviews that, in our experience, have little correlation with job performance.

The preparation industry includes LeetCode Premium subscriptions, AlgoExpert courses, NeetCode video series, mock interview services, Discord study groups, and coaching services charging hundreds of dollars per session. It exists because technical interviews are learnable, and because learning them requires significant investment.

This investment disadvantages several groups: senior engineers who haven't interviewed recently, career changers who lack formal CS training but have relevant skills, engineers from non-traditional backgrounds, and anyone who can't afford to spend 200+ hours on interview preparation.

What LeetCode actually measures:

- Pattern recognition for a fixed set of ~20 algorithmic patterns

- Ability to code under time pressure while being observed

- Memorization of data structures and their time/space complexities

- Comfort with competitive programming conventions

What LeetCode doesn't measure:

- Ability to read and understand existing code (the bulk of day-to-day engineering work)

- System design judgment under real-world constraints

- Debugging skills under production pressure

- Communication and collaboration with teammates

- Code review quality and mentorship ability

- Ability to make trade-offs with incomplete information

- Understanding of deployment, monitoring, and operational concerns

The overlap between "things LeetCode tests" and "things that make someone a good engineer" is vanishingly small.

Our New Process: Portfolio Reviews

We replaced algorithmic interviews with a three-step process that evaluates actual engineering ability:

Step 1: Portfolio Submission (async, 30 minutes of candidate time)

Candidates submit 2-3 examples of their best work. This can include:

- Open-source contributions (with links to PRs and discussion)

- Personal projects (with source code and documentation)

- Technical blog posts or documentation they've written

- Architecture documents or design proposals (anonymized if needed)

- A description of a complex problem they solved, with their approach

If a candidate doesn't have public work to share, we provide an optional take-home project: a small, realistic problem (building a CLI tool, designing an API, refactoring a messy codebase) that takes 2-4 hours. The take-home is paid at their expected hourly rate.

Step 2: Portfolio Discussion (60 minutes, with two engineers)

We review the submitted work with the candidate. This is not a presentation — it's a conversation. We ask:

- "Walk us through your decision-making on this architecture."

- "What would you do differently if you had more time?"

- "How did you handle [specific technical challenge] in this project?"

- "What trade-offs did you consider?"

- "How would this design change if the requirements scaled 10x?"

This conversation reveals depth of understanding, communication skills, and intellectual honesty in ways that no algorithm problem can. An engineer who can articulate why they chose a particular approach, acknowledge its limitations, and reason about alternatives is demonstrating real engineering judgment.

Step 3: Collaborative Debugging Session (45 minutes, with one engineer)

We present the candidate with a real bug from our codebase (sanitized to remove sensitive information). They debug it in a collaborative environment, working alongside one of our engineers.

We're not evaluating whether they find the bug (though most do). We're evaluating how they approach the problem: Do they read the error message carefully? Do they form hypotheses? Do they use debuggers or print statements? Do they ask clarifying questions? Do they communicate their thinking?

This is the most predictive component of our process. Debugging is the skill engineers use most frequently, and debugging under realistic conditions reveals capability that no contrived problem can.
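To make the evaluation criteria concrete, here is a minimal sketch of how an interviewer might record those observations. It is purely illustrative: the class name `DebuggingObservations` and its field names are hypothetical, chosen to mirror the questions listed above, and they do not describe an actual tool or scoring form.

```python
from dataclasses import dataclass, fields


@dataclass
class DebuggingObservations:
    """Hypothetical note-taking form; each field mirrors one question above."""

    read_error_carefully: bool
    formed_hypotheses: bool
    used_debugger_or_prints: bool
    asked_clarifying_questions: bool
    communicated_thinking: bool
    # Recorded for context, but deliberately left out of the summary:
    # the session evaluates approach, not whether the bug was found.
    found_bug: bool = False

    def observed_behaviors(self) -> list[str]:
        """Return the names of the approach behaviors that were observed."""
        return [
            f.name
            for f in fields(self)
            if f.name != "found_bug" and getattr(self, f.name)
        ]


notes = DebuggingObservations(
    read_error_carefully=True,
    formed_hypotheses=True,
    used_debugger_or_prints=False,
    asked_clarifying_questions=True,
    communicated_thinking=True,
)
print(notes.observed_behaviors())
```

Keeping `found_bug` out of the summary reflects the point made above: the outcome is noted, but the signal comes from how the candidate works.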

Addressing the "But It Doesn't Scale" Objection

The most common criticism of portfolio-based hiring is that it doesn't scale. LeetCode problems can be administered to thousands of candidates; portfolio reviews require human judgment for each submission.

This is true: our process handles fewer candidates per week. But we don't need to handle thousands of candidates. We need to identify the right 3-5 hires per quarter. Quality of signal matters more than volume of throughput.

If your hiring funnel requires screening thousands of candidates to find 5 good ones, the problem is your funnel, not your evaluation method. We improved our sourcing (employee referrals, targeted outreach, community involvement) so that the candidates entering our process were more likely to be good fits. Fewer candidates, better signal, better outcomes.

The Results After Two Years

- Mis-hire rate: Dropped from 22% to 5%.

- Time-to-hire: Decreased from 25 days to 10 days (eliminating multi-round algorithm interviews saved about two weeks).

- Diversity: Engineering team diversity improved across multiple dimensions. Removing the LeetCode barrier opened doors for non-traditional candidates.

- Candidate experience: Post-interview survey scores averaged 4.7/5, with candidates specifically praising the "realistic and respectful" process.

- Engineering quality: Code review quality improved. Architecture discussions became richer. The team's collective problem-solving ability increased.
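For readers who prefer relative figures, the two quantified results above can be restated with a little arithmetic. This is only a sketch: the helper `relative_reduction` is a hypothetical name, and the inputs are the numbers quoted in the list, nothing more.

```python
def relative_reduction(before: float, after: float) -> float:
    """Fraction by which a metric fell, relative to its starting value."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Figures quoted in the results list above.
mis_hire = relative_reduction(22, 5)       # mis-hire rate, in percent of hires
time_to_hire = relative_reduction(25, 10)  # time-to-hire, in days

print(f"Mis-hire rate: {22 - 5} points lower, a {mis_hire:.0%} relative reduction")
print(f"Time-to-hire: {25 - 10} days shorter, a {time_to_hire:.0%} relative reduction")
```

In other words, the mis-hire rate fell by roughly three quarters and time-to-hire by 60%.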

The diversity improvement deserves emphasis. LeetCode interviews systematically advantage candidates from elite CS programs that offer algorithms courses and competitive programming clubs. Portfolio reviews advantage candidates who build things, regardless of where they learned.

Conclusion

LeetCode interviews are the SAT of software engineering: a standardized test that measures preparation more than ability, that advantages privilege more than talent, and that persists because of institutional inertia rather than predictive validity.

Look at what people have built. Talk to them about how they built it. Watch them debug a real problem. You will learn more about an engineer's capability in one hour of genuine technical conversation than in ten hours of algorithmic puzzle-solving.

Written by XQA Team
